Using Evidence to Determine the Correct Chemical Equation: A Stoichiometry Investigation

During our stoichiometry unit, I wanted my students to take part in an engaging investigation. Many of the stoichiometry labs I had done in the past followed a more traditional structure, something like, “here is the reaction…predict how much…do the reaction…compare to prediction…determine % yield.” While such a lab has merit, I really wanted to immerse my students in an actual investigation that more accurately reflected the scientific skills I try to advocate for: experimental design, data collection, analysis, creating an argument from evidence, engaging in argument, etc. To achieve this, I opened my Argument-Driven Inquiry in Chemistry (ADI) book and happened to find a wonderful example.

Which Balanced Chemical Equation Best Represents the Thermal Decomposition of Sodium Bicarbonate?¹

Though I had used a version of the decomposition of sodium bicarbonate lab in our stoichiometry unit for years, with consistent results, what the ADI book provided was a surprisingly different and more creative approach.

The students are provided with four different balanced chemical equations that could explain how the atoms are rearranged during this decomposition.

Option 1: NaHCO3 (s) → NaOH (s) + CO2 (g)

Option 2: 2NaHCO3 (s) → Na2CO3 (s) + CO2 (g) + H2O (g)

Option 3: 2NaHCO3 (s) → Na2O (s) + 2CO2 (g) + H2O (g)

Option 4: NaHCO3 (s) → NaH (s) + CO (g) + O2 (g)

Their task: Figure out which balanced chemical equation accurately represents the decomposition of sodium bicarbonate.
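One way to see why mass data can separate the four options is that each equation predicts a different mass of solid left behind per gram of NaHCO3 heated. The sketch below is not from the ADI handout. It assumes standard atomic masses, and the 2.00 g sample mass and the helper names are hypothetical.

```python
# Standard atomic masses in g/mol (rounded).
ATOMIC_MASS = {"Na": 22.990, "H": 1.008, "C": 12.011, "O": 15.999}


def molar_mass(composition):
    """Molar mass (g/mol) from a dict of element -> atom count."""
    return sum(ATOMIC_MASS[el] * n for el, n in composition.items())


NAHCO3 = {"Na": 1, "H": 1, "C": 1, "O": 3}

# For each option: (moles NaHCO3, moles of solid product, solid's composition).
OPTIONS = {
    "Option 1 (NaOH)": (1, 1, {"Na": 1, "O": 1, "H": 1}),
    "Option 2 (Na2CO3)": (2, 1, {"Na": 2, "C": 1, "O": 3}),
    "Option 3 (Na2O)": (2, 1, {"Na": 2, "O": 1}),
    "Option 4 (NaH)": (1, 1, {"Na": 1, "H": 1}),
}


def solid_mass_ratio(n_reactant, n_solid, solid):
    """Grams of solid product predicted per gram of NaHCO3 decomposed."""
    return (n_solid * molar_mass(solid)) / (n_reactant * molar_mass(NAHCO3))


RATIOS = {name: solid_mass_ratio(*spec) for name, spec in OPTIONS.items()}

if __name__ == "__main__":
    sample_g = 2.00  # hypothetical starting mass of NaHCO3
    for name, ratio in RATIOS.items():
        print(f"{name}: {ratio:.3f} g solid per g NaHCO3, "
              f"{sample_g * ratio:.2f} g from a {sample_g:.2f} g sample")
```

Under these assumptions the predicted ratios come out to roughly 0.476, 0.631, 0.369, and 0.286 for Options 1 through 4, which are spaced far enough apart that a careful before-and-after mass measurement should be able to tell them apart.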

At first glance, the ingenuity of this challenge was not completely obvious to me. As chemistry teachers, the depth of our content knowledge allows us to systematically rule out three of the reactions without even performing the experiment. Even if we did need to do the investigation ourselves to determine the right equation, our experience in the lab and overall scientific literacy allow us to easily come up with a plan and identify exactly what we should be looking for.

However, our students are novices. They lack the content knowledge and they most certainly lack the laboratory skills to easily generate a plan for arriving at an evidence-based answer. While they might be lacking in these areas, they are not completely clueless. They know just enough to successfully accomplish their task, even if they do not immediately start making connections to content already learned. At the same time, their lack of knowledge prevents them from confidently knowing the correct reaction prior to investigation.

After thinking about it a bit, there were at least five distinct features that convinced me to pursue this lab.

1) Their lack of prior knowledge makes all four options appear plausible.

We had just finished our reactions unit, so they were all familiar with the generalized pattern that a decomposition reaction follows.

AB → A + B

To the students, this reactant is a complete curveball. As novices, they have no idea how to confidently predict the products of such a reaction. You know their gut instinct will be to suggest it decomposes into sodium and bicarbonate. As absurd as this seems to you and me, it seems plausible to many of them. Even though I could have given them a brief explanation as to why something like this would not decompose in this manner, I did not need to since it is not even offered as a potential equation—away with bicarbonate!

2) Application of stoichiometry

Since we were nearing the end of our stoichiometry unit, this was a perfect application. Though some of the products can be easily determined qualitatively, stoichiometry will need to be applied when trying to identify the solid product that remains. The use of stoichiometry to generate sufficient evidence that will support their eventual conclusion will be the meat of their argument.

3) Application of qualitative evidence

During our reactions unit, they had learned about testing for certain gases using a splint test. Because of this, many of them remembered that they could identify the presence of CO2 and O2 based on what happened when a lit splint was placed into the test tube.

4) Developing an argument from evidence

Sometimes it is hard to reduce, let alone eliminate, previous conceptions and biases when asking students to develop an argument from evidence. However, since each reaction appeared equally plausible from the students' perspective, the evidence they gathered was the primary driver behind the construction of their argument. They could not rely on prior knowledge alone because they did not know enough to predict the products without performing the investigation.

5) Student-driven experimental design

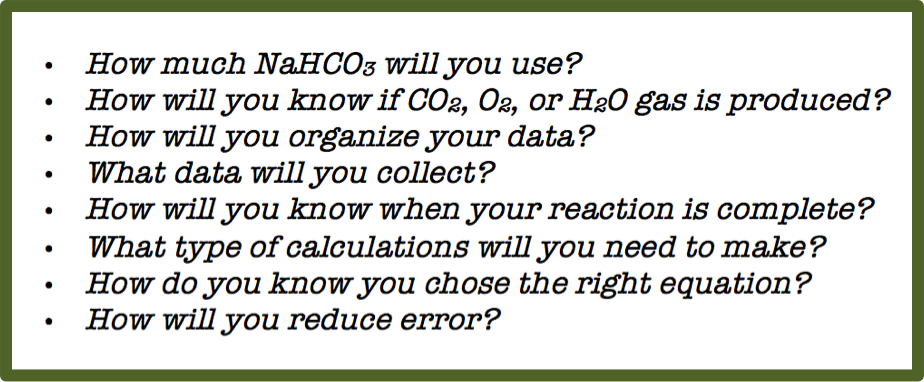

Though I demonstrated some basic safety tips, how to set up their apparatus, and the general approach to performing the reaction, the bulk of the experimental design was going to be on them. For the first time, they actually needed to consider questions such as:

Figure 1- Example questions from the ADI book

With respect to materials, I gave them the following list of equipment and the chemical they were going to consume.

Consumable: Solid NaHCO3

Equipment: Bunsen burner, lighter, test tube, glass stir rod, tongs, electronic balance, periodic table
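With only a balance available for quantitative work, the evidence comes down to mass by difference: weigh the empty test tube and stir rod, weigh again with the sample, and weigh once more after heating. As a rough sketch, the ratio of residue to sample can then be compared against what each equation predicts. The balance readings below are invented for illustration, the function names are hypothetical, and the predicted ratios are assumed values worked out from standard atomic masses.

```python
# Predicted grams of solid per gram of NaHCO3 for each option,
# worked out from standard atomic masses (rounded to three places).
PREDICTED_RATIO = {
    "Option 1": 0.476,  # NaOH
    "Option 2": 0.631,  # Na2CO3
    "Option 3": 0.369,  # Na2O
    "Option 4": 0.286,  # NaH
}


def mass_by_difference(container_g, container_plus_contents_g):
    """Mass of the contents, found by subtracting the empty container."""
    return container_plus_contents_g - container_g


def closest_option(observed_ratio, predicted=PREDICTED_RATIO):
    """Name of the option whose predicted ratio is nearest the observed one."""
    return min(predicted, key=lambda name: abs(predicted[name] - observed_ratio))


# Hypothetical balance readings in grams (invented for illustration).
tube_and_rod = 25.40
before_heating = 27.40  # tube + rod + NaHCO3
after_heating = 26.67   # tube + rod + solid residue

sample = mass_by_difference(tube_and_rod, before_heating)
residue = mass_by_difference(tube_and_rod, after_heating)
observed = residue / sample

print(f"observed ratio: {observed:.3f}")
print(f"closest match:  {closest_option(observed)}")
```

Weighing the product in the same test tube it was heated in means nothing hot ever has to be transferred to another container.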

When they were finished, each group was required to produce a whiteboard that resembled the following structure:

Figure 2 - Whiteboard Template

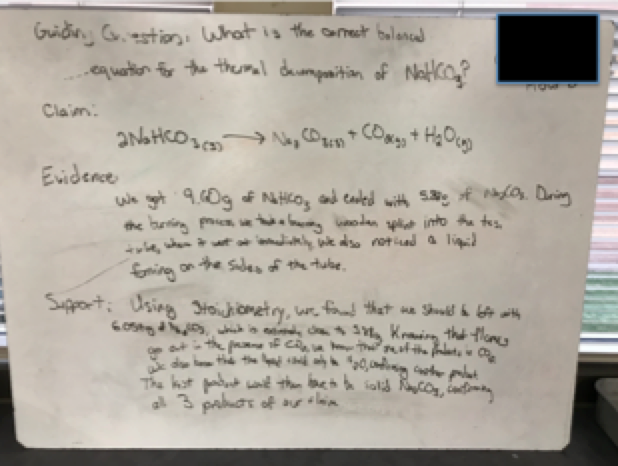

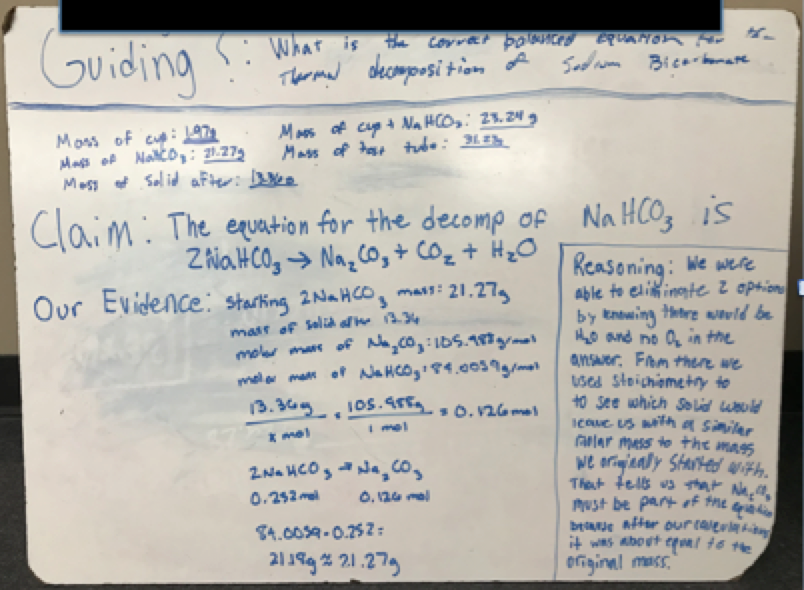

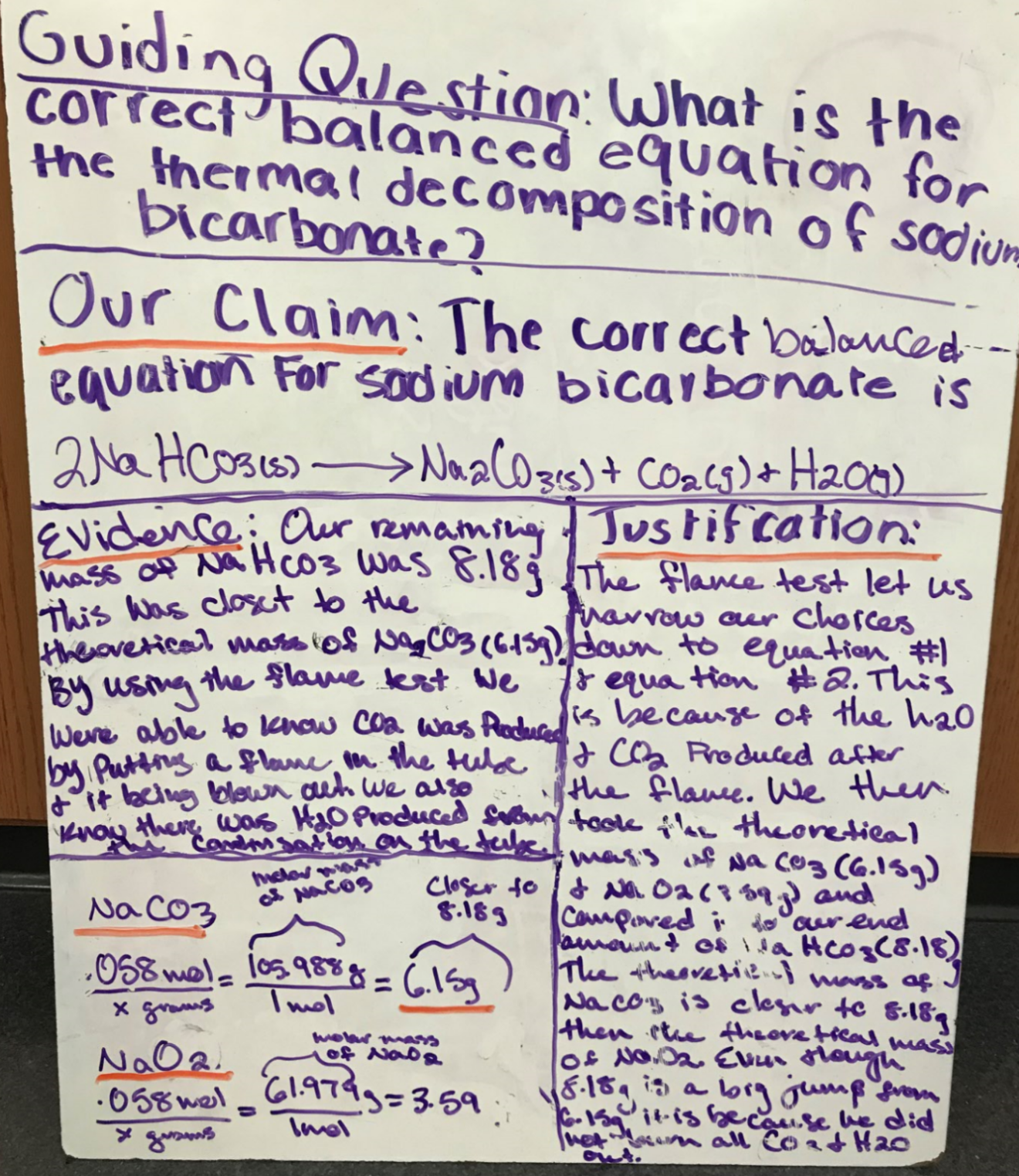

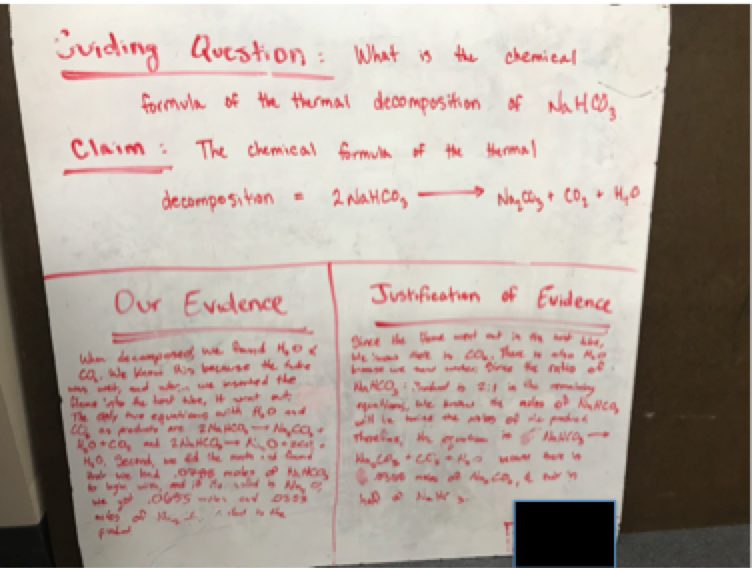

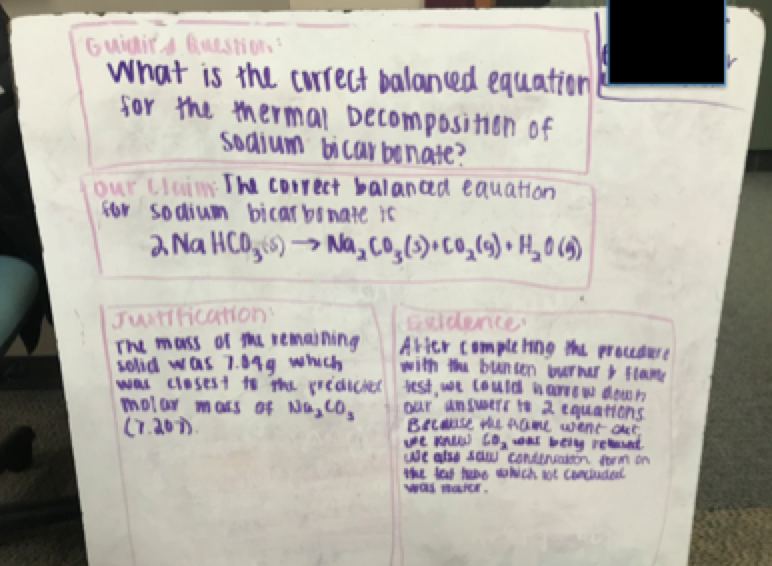

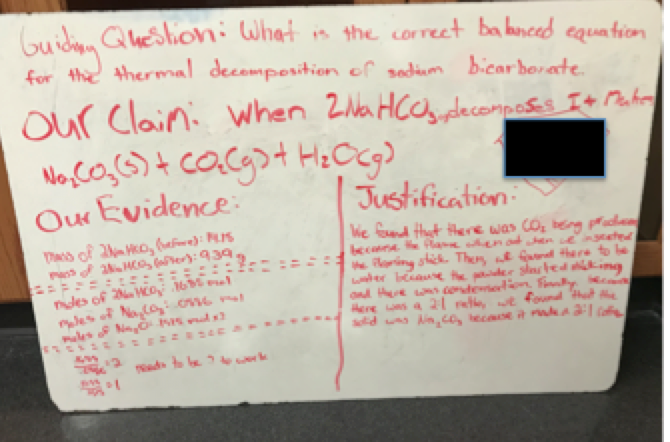

Student Whiteboards

While the method used for groups to communicate their argument to others can easily vary from teacher to teacher, I decided to have 2-3 groups come together and present their findings to each other. It was exciting listening to their conversations that would sometimes lead to genuine discourse, as opposed to a one-way presentation of results. As a teacher, my favorite scenario was when different groups would have different conclusions and, consequently, different chemical reactions proposed. Hearing them use their knowledge of stoichiometry to justify why their results made sense, or to point out the faulty reasoning in another group’s results, was something I wish I had recorded.

I had mentioned that one of the characteristics I liked about this lab was the student involvement in experimental design. Even though this feature is a hallmark trait of every ADI-themed lab, it was still a relatively new experience for me. Each group was given approximately 20 minutes to come up with an outline for their experimental design. While I had previously shown them how to safely perform the reaction, I gave them no guidance on what data to collect or even how to collect it. I did not tell them how long to heat their sample or what to look for when determining if the reaction was complete. This really threw them off and I could sense the frustration from several groups because, for once, I was not spoon-feeding them every single detail of each step in the procedure.

Class Discussion

By letting them take ownership of their experimental design, a few things happened to some groups that served as a learning experience and opportunity for discussing the importance of experimental design within the scientific process.

1. Some groups simply did not heat their sample for a long enough time. This resulted in a much higher product mass than they had predicted, since there was still unreacted sodium bicarbonate in the test tube. When trying to explain how their percent yield was over 100%, several groups initially struggled to realize they had simply stopped the reaction too soon. This made for good conversation regarding experimental error.

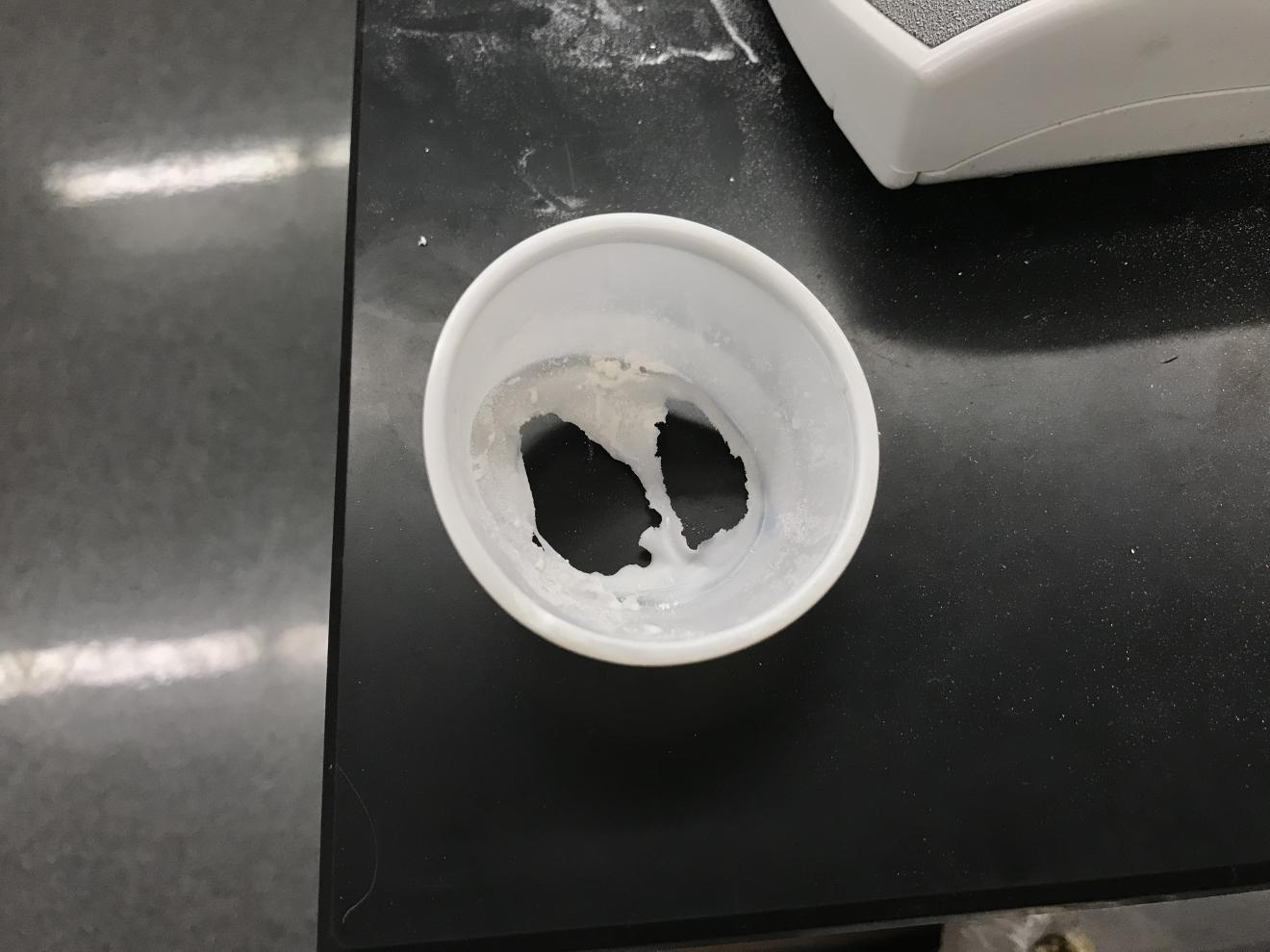

2. I was truly amazed by how many groups did not take the time to think about how they were going to collect their mass data. Everyone knew they needed the mass before and after, but several groups never considered exactly how they were going to do this. Some groups only recorded the mass of their sodium bicarbonate, without considering the mass of their test tube and glass stir rod. Doing so meant that, when their reaction was complete, they were going to empty the contents of their test tube into a plastic weighing container to collect the final mass. What they did not consider was that the contents of their test tube were going to still be really hot. So, when they transferred their product to the container that was on the scale, their product literally melted right through the container! (See below) Now there was product all over the scale and on the table. Groups that did this immediately recognized the flaw in their experimental design and I honestly saw it as a wonderful learning opportunity.

Figure 3 - Hot samples melt plastic.

3. Some groups literally filled half of their test tube with sodium bicarbonate. As a result, the reaction seemed to take forever to complete. They also never considered that starting with so much reactant made it less likely that all of it would decompose.
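The first issue above, stopping the heating early, can be sketched numerically. If only part of the sample decomposes, the residue is a mix of product and leftover NaHCO3, so the calculated percent yield climbs above 100%. This is a minimal illustration rather than anything from the ADI materials: the function name is hypothetical, and the ratio of 0.631 g of solid per gram of NaHCO3 assumes the group predicted Na2CO3 as the solid product (Option 2).

```python
def apparent_percent_yield(fraction_decomposed, solid_ratio):
    """Percent yield a group would calculate if heating stops early.

    fraction_decomposed: share of the NaHCO3 that actually reacted (0 to 1).
    solid_ratio: predicted grams of solid product per gram of NaHCO3.
    The result does not depend on the starting mass, which cancels out.
    """
    if not 0.0 <= fraction_decomposed <= 1.0:
        raise ValueError("fraction_decomposed must be between 0 and 1")
    # Residue per gram of sample = unreacted NaHCO3 + solid product formed.
    residue = (1.0 - fraction_decomposed) + fraction_decomposed * solid_ratio
    return 100.0 * residue / solid_ratio


SOLID_RATIO = 0.631  # assumed: Option 2, g Na2CO3 per g NaHCO3

for fraction in (0.50, 0.75, 0.90, 1.00):
    pct = apparent_percent_yield(fraction, SOLID_RATIO)
    print(f"{fraction:.0%} decomposed -> apparent yield {pct:.0f}%")
```

Because every gram of NaHCO3 that stays behind weighs more than the solid it would have become, any incomplete run in this sketch gives a value above 100%, and the overshoot shrinks as the reaction approaches completion.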

In Conclusion

While most groups executed the experiment without major flaws, I was reminded of the importance of giving them experiences that provide opportunities for failure and reflection in the lab. Students need to experience the fact that science is not just a linear process driven by knowing exactly what to do and exactly what to expect every step of the way without hiccups. Sometimes our experiments fail or produce results that do not make sense. When this happens, we think about how we can improve the experiment and do it over again. After accounting for our mistakes, if we are still surprised by the results, maybe there is something new to learn about the nature of reality!

Overall, the lab itself took 20 to 30 minutes to set up and execute. Students had the remaining 20 to 30 minutes of class to analyze their results and develop their initial argument, which was going to be finalized and communicated the following day.

Whether you are looking to add a bit more scientific inquiry to your labs or simply looking for a great stoichiometry lab that can be added to your collection, I encourage you to try something like this with your students!

Though you will need to purchase the Argument-Driven Inquiry in Chemistry book to access the full teacher handout and notes, you can find a free student-version lab handout online. I also included it in the Supporting Information below.

Editor's Note: Readers may be interested in reading a Pick about Argument-Driven Inquiry in Chemistry posted by Chad Bridle.

Resources

1 Argument-Driven Inquiry in Chemistry. Stoichiometry and Chemical Reactions: Which Balanced Chemical Equation Best Represents the Thermal Decomposition of Sodium Bicarbonate? NSTA Press, 2015, pp. 426–441.

Comments

Wow

Ben - Absolutely love this idea. Going to try this with my kids perhaps instead of a test. Thanks so much. Can't wait to try it.

In reply to Wow by Chad Husting

Potential practicum?

Chad,

Great idea! Lauren Wester had suggested possibly using it as a practicum for her stoichiometry unit. I love that idea. I hadn't even thought about that. It hits on so many skills from that unit that it could easily serve as a meaningful assessment. Thanks for reading!

Adding lab related questions to end of unit assessments

Thanks for the great discussion, Ben. I also like the practicum idea, but when time has been an issue, I have followed up a lab like this with a lab quiz or related questions on a unit test. I would pick a different reaction (in this case, the decomposition of sodium chlorate would work) and provide possible balanced equations like the original lab does. Most importantly, I would provide a completed data table. Then, I would ask students to choose the correct balanced equation after they consider the data table.

In reply to Adding lab related questions to end of unit assessments by Deanna Cullen

great minds think alike...

So true. In fact, that's exactly what I did this year!

lab assessment?

I have tried to add more CER labs to my curriculum as well, but am struggling on how to assess them. Did you give them a "grade" for the lab work or do a post-lab assessment? I love the idea, and I think presenting to others helps to reduce those that are coat-tail riders.

Thanks!

In reply to lab assessment? by Karen Collins

grading lab work

Karen,

Love that you're incorporating more CER stuff into your curriculum! The grade that was given to them was based on the legitimacy of their CER, which was written on their whiteboard. We have our own rubric that we use as a department for grading CERs. I'm certainly open to grading it in a more comprehensive way, which may include their ability to develop a procedure, organize and gather appropriate data, and present their argument.
